Employee Commitment Surveys: How to Create, Measure, and Act on Results

by Ryan Stoltz

Table of contents

- What is an employee commitment survey?

- Employee commitment vs employee engagement: Key differences

- Benefits of measuring employee commitment

- Essential employee commitment survey questions

- The recognition-commitment-alignment connection

- Tailoring commitment surveys for specific contexts

- How to create an effective employee commitment survey

- Analyzing employee commitment survey results

- Turning survey insights into action

- Survey tools and templates

- Frequently Asked Questions

- Conclusion

If you need a clearer read on retention risk, loyalty, and employees' commitment to work, an employee commitment survey can give you evidence you can act on. But to be useful, the survey has to measure the right things, ask the right questions, and lead to decisions your leaders and managers can follow through on.

This article helps you understand what an employee commitment survey is, how it differs from an engagement survey, and how to measure employee commitment in a way that is practical and credible. You'll also find employee commitment examples, sample question types, guidance on analyzing results, and a step-by-step approach for turning feedback into action.

What is an employee commitment survey?

An employee commitment survey measures how attached employees are to the organization and whether they intend to stay. Unlike a satisfaction survey, which reflects how people feel about their job today, a commitment survey looks at the longer-term relationship between an employee and the organization. The distinction matters: someone can be satisfied in the short term while still planning to leave within a year.

The most widely referenced framework for understanding commitment comes from organizational researchers John Meyer and Natalie Allen, whose three-component model has shaped how HR teams design and interpret commitment surveys for decades.

Core components of commitment

- Affective commitment: The emotional connection an employee feels toward the organization. People high in affective commitment stay because they want to.

- Continuance commitment: The perceived cost of leaving, whether financial, social, or practical. People high in continuance commitment stay because they feel they need to.

- Normative commitment: A felt sense of obligation to the organization. People high in normative commitment stay because they feel they should.

To these three components we would add:

- Alignment with company values and goals: Whether employees see their own purpose reflected in the organization's direction.

- Job satisfaction: A key contextual signal that helps interpret the strength and nature of overall commitment.

Why commitment matters more than ever

- Commitment predicts voluntary turnover more reliably than overall employee satisfaction scores alone, making it a stronger early-warning signal for retention risk.

- Higher commitment correlates with discretionary effort, the work people do beyond their job description, because they genuinely care about outcomes.

- It reflects the overall quality of the employee-organization relationship, not just a snapshot of how a quarter went.

- For workforce planning, commitment data directly informs succession planning and helps protect institutional knowledge before it walks out the door.

Employee commitment vs employee engagement: Key differences

Commitment and engagement are related, but they measure different things. Using one employee survey when you need the other leads to misread data and interventions that miss the point. The distinction is worth getting right before you write a single question.

Engagement reflects how energized, involved, and absorbed employees feel in their current work. Employee commitment refers to how attached they are to the organization itself and whether they intend to stay. An employee can be highly engaged in their role while actively job searching. Another can feel deeply loyal to the company while finding day-to-day work draining. Both combinations are common, and both require different responses.

Research on the engagement-commitment relationship shows a moderate positive correlation between the two, but they are not interchangeable. Employees in the high-engagement, low-commitment state often represent your strongest performers and your highest flight risk.

When to measure each

- Use engagement surveys when diagnosing team-level performance, evaluating manager effectiveness, responding to a dip in productivity, or assessing whether job design is working.

- Use commitment surveys when facing elevated voluntary turnover, assessing the cultural impact of a reorganization, evaluating merger or acquisition integration, or identifying employees at risk of leaving before they decide to.

- Combine both when you need a complete organizational health picture and have the capacity to act on what you find.

- Track both over time. A consistent gap between high engagement and low commitment is an early signal that employees like the work but not the organization, a distinction that has real consequences for retention strategy.

Decision framework: when to use each survey type

| Scenario | Use a commitment survey | Use an engagement survey | Use both |
| --- | --- | --- | --- |
| Retention risk or high voluntary turnover | Yes | No | When the full picture is needed |
| Team performance issues | No | Yes | Occasionally |
| Manager effectiveness review | No | Yes | Occasionally |
| Culture change or reorganization | Yes | No | When the full picture is needed |
| Merger or acquisition integration | Yes | No | When the full picture is needed |
| Job design or role satisfaction concerns | No | Yes | Occasionally |
| Comprehensive organizational health review | No | No | Yes |
| Timing | Annually or biannually | Quarterly or pulse format | Coordinate cadences |
| Population | Entire organization | Can be team-specific | Entire organization |

Key Q12 elements related to commitment

The Gallup Q12 is the most widely validated engagement instrument in use, and it measures engagement rather than pure organizational commitment. That said, several of its twelve items touch directly on factors that drive long-term commitment.

Gallup's decades of meta-analytic research link Q12 scores to concrete business outcomes, including profitability, productivity, turnover, and customer satisfaction. The Q12 is not a commitment survey, but understanding its structure is useful when designing one.

Lessons for commitment survey design

The Q12's durability offers a few practical lessons that translate directly to commitment survey design.

- Keep the survey focused. Twelve well-chosen items consistently outperform fifty loosely constructed ones.

- Use clear, behaviorally anchored language that employees can answer without interpretation.

- Prioritize items that address actionable workplace factors, not just how employees feel in the abstract.

- Build in validation over time. Benchmarking data becomes more valuable the longer it accumulates.

- Connect survey items to business outcomes from the start, so results can inform decisions rather than just describe them.

The broader takeaway: whether you're running engagement survey questions or a commitment-focused instrument, the quality of the questions matters more than the quantity. Design with precision, and you'll get data you can actually use.

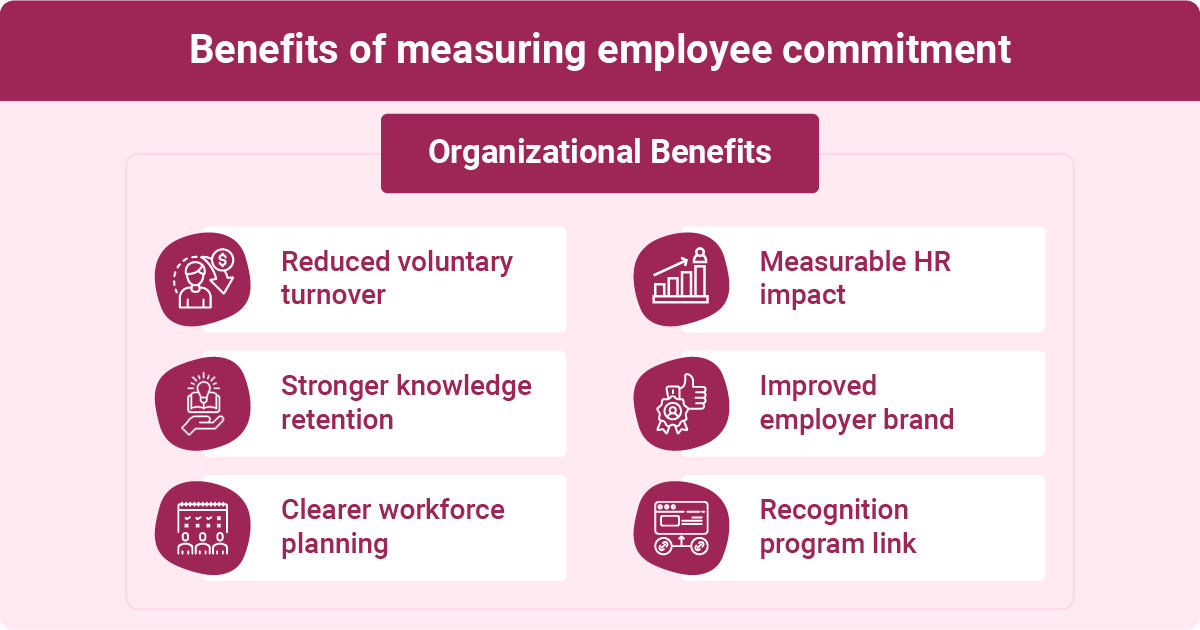

Benefits of measuring employee commitment

Measuring commitment isn't just a data exercise. It's an early warning system that tells you who's likely to leave, where cultural gaps are widening, and whether your HR investments are actually changing the employee-organization relationship. Without that data, retention interventions tend to be reactive, blanket, and expensive.

Organizational benefits

- Reduced voluntary turnover and the costs attached to it, which can run 50-200% of an employee's annual salary, depending on role complexity and seniority

- Stronger institutional knowledge retention, particularly among tenured employees who carry expertise that isn't documented anywhere

- Clearer signals for workforce planning and succession, so you're not guessing who's at risk before a critical project or transition

- A measurable baseline for evaluating whether HR programs are moving the needle over time

- Improved employer brand outcomes when employees who feel heard are more likely to become advocates rather than detractors

Research on recognition programs through the Workhuman® Cloud points to a meaningful link between cultures of recognition and turnover reduction, a finding that reinforces commitment measurement as a lever for managing workforce costs rather than simply tracking sentiment.

Employee benefits

- A visible signal that leadership is listening and willing to act on what they hear

- A structured channel for voice, which matters especially for employees who don't have direct access to senior decision-makers

- Evidence of organizational investment in their experience, not just their output

- More targeted professional development programs and conversations grounded in what employees actually value and need

Direct impact on business performance metrics

- Meta-analyses show committed employees demonstrate 20-30% higher productivity rates compared to their less committed peers.

- Organizations in the top quartile of commitment see 18-43% lower absenteeism.

- High commitment correlates with 10-20% higher customer satisfaction scores, a link that matters in any customer-facing function.

- Commitment predicts discretionary effort and organizational citizenship behaviors, the contributions that don't show up in a job description but shape team culture and output.

- Team-level commitment scores correlate directly with revenue per employee, making this a business performance metric, not just a culture one.

- High-commitment environments also see fewer safety incidents and quality defects, outcomes that carry real financial and reputational weight.

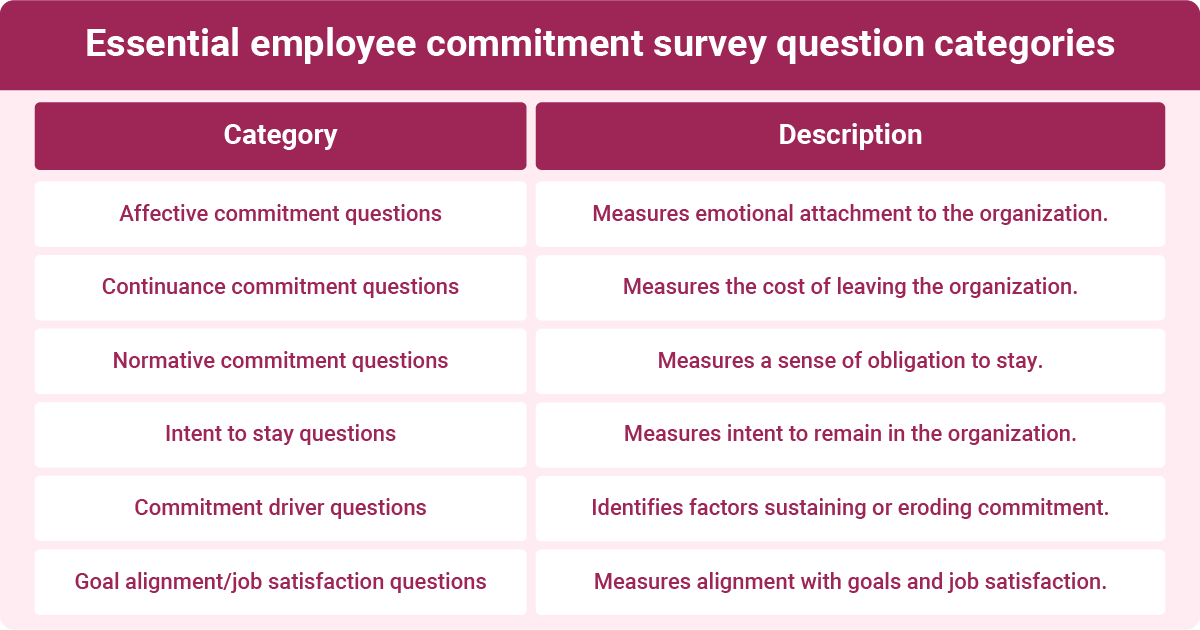

Essential employee commitment survey questions

The quality of your commitment survey lives or dies on question design. Vague items produce vague data. Well-constructed questions, built around the three components of commitment and grounded in validated research, give you something you can actually segment, benchmark, and act on.

Aim for 15 to 25 total survey questions to keep completion rates high without sacrificing depth. Mix Likert-scale items for quantitative tracking with a small number of open-ended questions to capture the context behind the scores.

Affective commitment questions

These items measure emotional attachment. Strong affective commitment is your best indicator that an employee wants to be there, not just that they feel stuck.

Continuance commitment questions

These items reveal whether employees are staying by choice or by calculation. High continuance commitment paired with low affective commitment is a retention risk hiding in plain sight.

- It would be difficult for me to leave this organization right now.

- I have invested too much in this organization to consider leaving.

- Leaving would require a significant personal sacrifice.

- I have few alternative job options available to me.

Normative commitment questions

Normative commitment captures felt obligation. It often reflects how fairly the organization has treated the employee and whether they feel a sense of reciprocity.

- I feel an obligation to remain with this organization.

- This organization deserves my loyalty.

- I would feel guilty if I left this organization now.

- I owe this organization a great deal.

- I would recommend working here to others.

Intent to stay questions

Direct intent-to-stay items are among the strongest predictors of actual turnover. Include reverse-scored items to catch social desirability bias.

- I plan to be working here in one year.

- I frequently think about quitting. (reverse scored)

- I am actively looking for jobs outside this organization. (reverse scored)

- I would recommend this organization as a great place to work.
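Reverse-scored items have to be flipped before they are averaged with the rest, otherwise a "strongly agree" on "I frequently think about quitting" would raise the intent-to-stay score. The sketch below shows one way to do that. It assumes a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree), and the item keys are hypothetical labels for the four items above, not part of any validated instrument.

```python
# Assumption: 5-point Likert scale, 1 = strongly disagree, 5 = strongly agree.
SCALE_MIN, SCALE_MAX = 1, 5

def reverse_score(raw: int) -> int:
    """Flip a response so a higher value always means stronger intent to stay."""
    if not SCALE_MIN <= raw <= SCALE_MAX:
        raise ValueError(f"response {raw} is outside the {SCALE_MIN}-{SCALE_MAX} scale")
    return SCALE_MIN + SCALE_MAX - raw

# Hypothetical item keys; True marks the items that are reverse scored.
INTENT_ITEMS = {
    "plan_to_be_here_in_one_year": False,
    "frequently_think_about_quitting": True,
    "actively_looking_elsewhere": True,
    "would_recommend_as_place_to_work": False,
}

def intent_to_stay_score(responses: dict) -> float:
    """Average the four items after flipping the negatively worded ones."""
    adjusted = [
        reverse_score(responses[item]) if is_reversed else responses[item]
        for item, is_reversed in INTENT_ITEMS.items()
    ]
    return sum(adjusted) / len(adjusted)

# Made-up answers from one respondent, for illustration only.
example = {
    "plan_to_be_here_in_one_year": 4,
    "frequently_think_about_quitting": 2,
    "actively_looking_elsewhere": 1,
    "would_recommend_as_place_to_work": 5,
}
print(intent_to_stay_score(example))  # 4.5
```

On a 1-to-5 scale the flip is simply 6 minus the raw answer, so a 2 on "I frequently think about quitting" counts as a 4 toward intent to stay.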

Commitment driver questions

These items identify what's sustaining or eroding commitment, giving managers and HR leaders levers to pull rather than just a diagnosis.

- My manager actively supports my professional development.

- I see clear opportunities for advancement within this organization.

- My work gives me a meaningful sense of purpose.

- I feel recognized for the contributions I make.

- Leadership communicates a compelling direction for the organization.

Recognition surfaces here deliberately. When employees feel consistently seen and valued, their emotional attachment to the organization deepens. That connection between recognition and commitment is one of the clearest patterns in workforce research.

The recognition-commitment-alignment connection

There's a reason recognition keeps appearing in commitment research. It's not just that appreciated employees feel better about their jobs – it's that recognition is one of the only mechanisms organizations have for making values and strategy visible at the individual level, in real time.

Workhuman's 2025 Global Research Study on alignment, conducted across 2,500 employees in five countries, found that employees in companies with a recognition program are 23% more likely to feel aligned with organizational goals.

When that recognition is explicitly connected to strategic initiatives, workers are 129% more likely to understand how their daily work contributes to company priorities – and 5x more likely to feel personally invested in achieving them.

Recognition doesn't just reward past behavior; it teaches people what the organization values. Each moment of recognition is, in effect, a signal: this is what good looks like here. Over time, those signals build the psychological safety that allows alignment to stick. In fact, employees who received recognition within the past month report psychological safety scores 21% higher than those who hadn't been recognized recently.

For commitment survey designers, this points to something worth building in explicitly: questions that measure not just whether employees feel recognized, but whether they understand why they were recognized and how it connects to what the organization is trying to do.

That distinction, between recognition as a pat on the back and recognition as a strategic signal, is often where commitment programs either take hold or plateau.

Goal alignment and overall job satisfaction questions

These items provide context for interpreting the rest of the survey. An employee who scores low on affective commitment but high on mission alignment is a different retention problem than one who scores low on both.

- I believe in the purpose and mission of this organization.

- I am satisfied with the role I currently have.

- My day-to-day work reflects what I care about most professionally.

Pair these with an open-ended item such as: "What would make you more likely to stay with this organization long-term?" That single question often surfaces what Likert scales miss.

Validated survey instruments: TCM and OCQ

If you need data that holds up under scrutiny, whether for internal benchmarking, academic research, or board-level reporting, lean on validated instruments rather than building from scratch.

| Instrument | Authors | Items | What it measures | When to use it |
| --- | --- | --- | --- | --- |
| Three-Component Model (TCM) Employee Commitment Survey | Meyer & Allen | 18 to 24 items | Affective, continuance, and normative commitment | Research, benchmarking, or when cross-industry comparison is a priority |
| Organizational Commitment Questionnaire (OCQ) | Porter et al. | 15 items | Identification with and involvement in the organization | Foundational commitment tracking; particularly useful for longitudinal measurement |

Both instruments have been validated across decades and cultures, making them reliable starting points. Academic versions are available at no cost through published research. Some commercial applications require licensing.

For most internal diagnostic purposes, you can adapt the item structure and language from validated scales while customizing wording to fit your organization's context. If your goal is peer-reviewed research or formal benchmarking, use the validated versions as written.

Tailoring commitment surveys for specific contexts

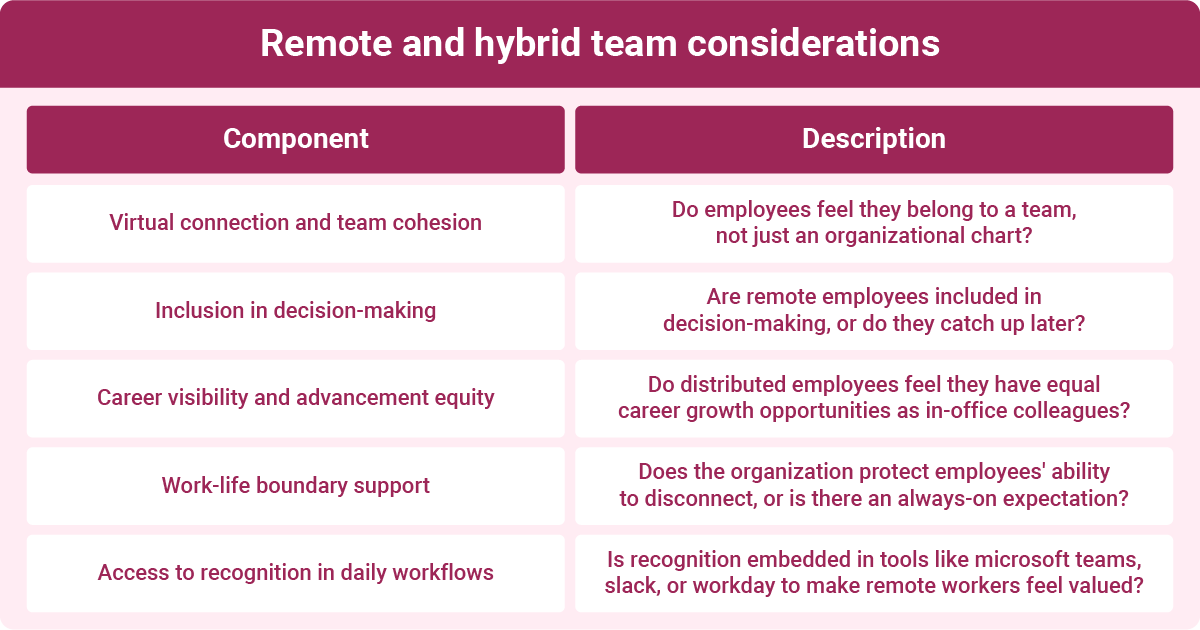

Remote and hybrid team considerations

Remote and hybrid employees face a specific commitment risk: out of sight can mean out of the organizational relationship. Research consistently shows that reduced human connection is a primary driver of disengagement and weakened organizational attachment in distributed environments.

Tailor your commitment survey for remote and hybrid teams by adding questions that surface what standard instruments tend to miss:

- Virtual connection and team cohesion: Do employees feel like they belong to a team, not just an org chart?

- Inclusion in decision-making: Are remote employees part of conversations that shape their work, or are they catching up after the fact?

- Career visibility and advancement equity: Do distributed employees believe they have the same career growth opportunities as colleagues who are in the office more often?

- Work-life boundary support: Does the organization actively protect employees' capacity to disconnect, or does remote work mean always-on expectations?

- Access to recognition in daily workflows: When recognition isn't embedded in the tools employees already use, distributed workers often feel invisible. Workhuman® Cloud integrations with platforms like Microsoft Teams, Slack, Outlook, and Workday help make recognition a natural part of the remote workday, which is worth measuring directly in a commitment survey for distributed teams.

Small business adaptations

Smaller organizations don't need a 24-item validated instrument to get useful commitment data. What they need is a focused, honest set of questions that reflect the realities of a smaller workforce.

- Shorten to 10 to 15 items. Response fatigue hits harder when your team is 30 people, and everyone knows who gave what feedback.

- Skip the segmentation-heavy analysis. With smaller sample sizes, focus on themes rather than demographic breakdowns to protect anonymity.

- Lean on open-ended questions. In small teams, a single follow-up prompt often surfaces more than a full Likert battery.

- Prioritize questions about leadership access, professional growth opportunities, and mission alignment, the commitment drivers most distinctive to smaller organizations, where culture is often set from the top.

How to create an effective employee commitment survey

A well-designed survey produces data you can act on. A poorly designed one produces noise that erodes confidence in the entire process. The steps below walk you through a complete design-to-deployment cycle, from setting objectives to piloting the instrument before it goes live.

Step 1: Define your objectives

Start here. Without a clear objective, every other design decision is guesswork.

- Decide what question you're trying to answer. Are you assessing organization-wide retention risk? Measuring cultural impact after a reorganization? Identifying commitment gaps among specific teams or job levels?

- Set success metrics before you write a single question. What would a meaningful change in scores look like? What score thresholds would prompt an action?

- Identify stakeholders who need to act on results. If your CHRO and department heads won't see this data, it won't drive anything. Align upfront on who owns the follow-through.

- Define your timeline. A realistic cycle from design to results communication typically runs six to ten weeks. Build that in from the start.

Step 2: Select and customize questions

Use validated instruments as your foundation, then adapt.

- Start with a validated framework such as the TCM Employee Commitment Survey or the OCQ, both covered in the previous section, to anchor your item set in established research.

- Select 15 to 25 items across the components that matter most to your current objective. If retention risk is your primary concern, weight toward affective commitment and intent-to-stay items.

- Add two to three open-ended questions to capture context that Likert scales miss. A single prompt like "What would make you more likely to stay long-term?" often surfaces your most actionable insight.

- Review items for clarity. Industrial-organizational psychology research consistently finds that double-barreled questions, abstract language, and leading phrasing distort responses. Each item should address one idea, use plain language, and avoid suggesting a preferred answer.

- Build in reverse-scored items to reduce social desirability bias and catch respondents who are clicking through without reading.

Step 3: Choose your survey platform

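The platform matters less than the process, but a few criteria are worth evaluating. They are summarized in the survey platform selection criteria table in the tools and templates section of this article.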

For broader guidance on choosing the right infrastructure, Workhuman's® employee survey guide covers platform selection considerations alongside survey design fundamentals.

Step 4: Communicate and deploy

How you launch the survey shapes whether employees take it seriously.

- Send a message from senior leadership before the survey opens. Employees need to believe results will be used, not archived.

- Be specific about purpose, timing, and confidentiality. Vague communication breeds skepticism and suppresses employee participation.

- Keep the survey window open for 7 to 10 business days, long enough to accommodate varied schedules, short enough to maintain momentum.

- Send one reminder at the midpoint and, if needed, a final one shortly before the window closes. More than two reminders tend to signal anxiety about low participation, which doesn't inspire confidence.

Step 5: Pilot test

Don't skip this step. A pilot catches problems that internal review misses.

- Run the survey with a small, representative group of 10 to 15 employees before full deployment.

- Measure completion time. If the survey takes longer than 12 minutes, cut items.

- Ask pilot participants whether any questions felt confusing, uncomfortable, or redundant. Their feedback improves response quality for the full deployment.

- Review response distributions from the pilot. If most items cluster at one end of the scale, your questions may be leading, or your scale may need adjustment.
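The two quantitative pilot checks above (completion time and clustered responses) are easy to automate. The following is a minimal sketch, not a prescribed method: it assumes a 1-to-5 scale, uses the median completion time as the yardstick, and treats an item as "clustered" when 80% or more of answers sit at one end. The 80% cutoff, the item keys, and the pilot data are all illustrative assumptions.

```python
from statistics import median

MAX_MINUTES = 12      # completion-time limit from the pilot guidance
CLUSTER_SHARE = 0.8   # assumption: flag an item when 80%+ of answers sit at one end

def pilot_report(completion_minutes, item_responses):
    """Return (too_long, clustered_items) for a pilot run on a 1-5 scale."""
    too_long = median(completion_minutes) > MAX_MINUTES
    clustered = []
    for item, answers in item_responses.items():
        low_share = sum(a <= 2 for a in answers) / len(answers)
        high_share = sum(a >= 4 for a in answers) / len(answers)
        if max(low_share, high_share) >= CLUSTER_SHARE:
            clustered.append(item)
    return too_long, clustered

# Made-up pilot data from ten participants, for illustration only.
minutes = [9, 11, 14, 10, 13, 12, 8, 15, 11, 10]
responses = {
    "deserves_my_loyalty": [5, 5, 4, 5, 4, 5, 5, 4, 5, 3],
    "difficult_to_leave": [2, 4, 3, 5, 1, 3, 4, 2, 3, 4],
}
print(pilot_report(minutes, responses))  # (False, ['deserves_my_loyalty'])
```

A flagged item is a prompt to reread the wording, not proof that it is leading. In a small pilot group, genuine agreement can produce the same pattern.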

Direct feedback mechanisms

Survey data gives you breadth. Direct feedback gives you depth. Use both.

- Schedule optional follow-up conversations with employees who volunteer to share more after the survey closes.

- Build manager-level debrief sessions into your action planning calendar, so team-level context doesn't get lost between the survey results and the organization-wide rollup.

- Create a visible feedback loop by sharing what you heard and what you're doing about it, before the next survey cycle opens. That single step does more for future response rates than any survey design improvement.

Analyzing employee commitment survey results

Collecting data is the easy part. What matters is how you move from raw scores to a clear picture of where commitment is strong, where it's eroding, and what to do about it. A structured analysis workflow keeps you from drowning in numbers or jumping to conclusions before you've seen the full pattern.

Key metrics to calculate

Start by building your core data layer before you interpret anything.

- Overall commitment index: Calculate the average score across all items, after recoding any reverse-scored items, to establish a single organization-wide baseline. This is your headline number.

- Dimension scores: Break the index into affective, continuance, and normative sub-scores. A high overall score that masks low affective commitment tells a very different story than a balanced one.

- Response rate by segment: Low response rates in specific departments or tenure bands are data in themselves. A team with 40% participation may be telling you something before you've read a single answer.

- Distribution analysis: Look beyond averages. A mean score of 3.6 out of 5 means something different when most responses cluster at 4.0 versus when they're split between 2.0 and 5.0.

- Year-over-year trends: If you've run this survey before, trend lines matter more than point-in-time scores. A steady decline in affective commitment across two cycles is a more urgent signal than a single low score.

- Complementary metrics: Pull in eNPS (Employee Net Promoter Score) as a loyalty signal, voluntary turnover rates to connect survey findings to real exit behavior, and absenteeism data as a secondary indicator of commitment trends.
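As a rough illustration of the first few metrics in this list, the sketch below computes an overall index, the three dimension sub-scores, and a response distribution. The item keys (`aff_1`, `con_1`, and so on), the two-items-per-dimension layout, and all of the data are hypothetical. It also assumes reverse-scored items have already been recoded.

```python
from collections import Counter
from statistics import mean

# Hypothetical mapping of item keys to the three commitment dimensions.
DIMENSIONS = {
    "affective": ["aff_1", "aff_2"],
    "continuance": ["con_1", "con_2"],
    "normative": ["nor_1", "nor_2"],
}

def dimension_scores(rows):
    """Mean score per dimension across all respondents."""
    return {
        dim: mean(row[item] for row in rows for item in items)
        for dim, items in DIMENSIONS.items()
    }

def commitment_index(rows):
    """Mean across every item and respondent: the headline number."""
    all_items = [item for items in DIMENSIONS.values() for item in items]
    return mean(row[item] for row in rows for item in all_items)

def distribution(scores):
    """Count of responses at each point of a 1-5 scale."""
    counts = Counter(scores)
    return {point: counts.get(point, 0) for point in range(1, 6)}

# Made-up responses from four employees, for illustration only.
rows = [
    {"aff_1": 4, "aff_2": 5, "con_1": 3, "con_2": 2, "nor_1": 4, "nor_2": 4},
    {"aff_1": 2, "aff_2": 3, "con_1": 4, "con_2": 5, "nor_1": 3, "nor_2": 4},
    {"aff_1": 5, "aff_2": 4, "con_1": 2, "con_2": 3, "nor_1": 5, "nor_2": 4},
    {"aff_1": 2, "aff_2": 1, "con_1": 5, "con_2": 4, "nor_1": 3, "nor_2": 3},
]
print(commitment_index(rows))   # 3.5
print(dimension_scores(rows))   # affective 3.25, continuance 3.5, normative 3.75

# Two made-up teams with the same 3.6 mean but very different shapes.
clustered = [4, 4, 4, 3, 4, 3, 4, 3, 4, 3]
split = [2, 5, 2, 5, 5, 2, 5, 2, 5, 3]
print(distribution(clustered))  # {1: 0, 2: 0, 3: 4, 4: 6, 5: 0}
print(distribution(split))      # {1: 0, 2: 4, 3: 1, 4: 0, 5: 5}
```

In this toy data the 3.5 headline sits on top of the weakest affective score, which is exactly the pattern the dimension breakdown is meant to expose. The two teams at the end show why a mean alone can mislead: one is lukewarm throughout, the other is polarized.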

Segmentation analysis

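Don't stop at the organization-wide number. An overall average hides the patterns that matter, so break results down by segment, such as department, tenure band, job level, or region, to see where commitment is strong and where it's eroding.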
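A segment breakdown can be sketched in a few lines. The example below reports a response rate and a mean score per segment, and suppresses the mean for any segment with fewer than five respondents to protect anonymity. The five-respondent floor mirrors the "typically 5+" threshold in the platform criteria later in this article; the segment names, headcounts, and scores are made up.

```python
from statistics import mean

MIN_GROUP_SIZE = 5  # assumption: hide scores for segments with fewer respondents

def segment_summary(headcount, responses):
    """headcount: {segment: employees invited}; responses: [(segment, index score)]."""
    summary = {}
    for segment, invited in headcount.items():
        scores = [score for seg, score in responses if seg == segment]
        enough = len(scores) >= MIN_GROUP_SIZE
        summary[segment] = {
            "response_rate": round(len(scores) / invited, 2),
            # None means "suppressed", not "zero".
            "mean_score": round(mean(scores), 2) if enough else None,
        }
    return summary

# Made-up data for illustration only.
headcount = {"Engineering": 10, "Logistics": 15, "Finance": 4}
responses = (
    [("Engineering", s) for s in [4.2, 3.8, 4.0, 3.6, 4.4, 3.9, 4.1, 4.0]]
    + [("Logistics", s) for s in [3.0, 2.8, 3.4, 3.2, 2.6, 3.0]]
    + [("Finance", s) for s in [4.5, 3.5, 4.0]]
)
summary = segment_summary(headcount, responses)
for segment, stats in summary.items():
    print(segment, stats)
```

Here Logistics shows both a 40% response rate and the lowest mean, while Finance is reported by response rate only because three respondents are too few to show a score safely.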

Driver analysis

Once you have scores by dimension and segment, the next question is: what's actually driving commitment or undermining it?

- Run correlation or regression analysis to identify which survey items most strongly predict your overall commitment index. This ranks your potential interventions by likely impact.

- Look for high-importance, low-scoring areas: the items employees say matter most but rate lowest. Those are your priority levers.

- Don't assume the obvious factors are the most powerful. Recognition, manager quality, and career visibility are consistently strong commitment drivers, but their relative weight varies by workforce segment. Let the data confirm the priority.
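For teams without a statistics package, a simple correlation ranking is a reasonable first pass at driver analysis. The sketch below computes the Pearson correlation between each driver item and each respondent's overall commitment index, then flags items that correlate strongly but score low. Note the substitution: this uses correlation as a stand-in for importance rather than asking employees what matters most. The cutoffs (0.5 correlation, 3.5 mean), the item keys, and the data are illustrative assumptions, and a correlation does not by itself show cause.

```python
from math import sqrt
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists (neither may be constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    spread = sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))
    return cov / spread

def rank_drivers(driver_items, index_scores):
    """Return (item, correlation with index, mean score), strongest driver first."""
    rows = [
        (item, round(pearson(scores, index_scores), 2), round(mean(scores), 2))
        for item, scores in driver_items.items()
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)

def priority_levers(ranked, min_corr=0.5, max_mean=3.5):
    """Items that track commitment closely but score low. Both cutoffs are assumptions."""
    return [item for item, corr, avg in ranked if corr >= min_corr and avg < max_mean]

# Made-up data: one entry per respondent, in the same order in every list.
index_scores = [4.2, 3.1, 4.5, 2.8, 3.9, 3.3, 4.0, 2.5]
drivers = {
    "feel_recognized": [4, 2, 5, 2, 4, 3, 4, 1],
    "manager_supports_development": [4, 4, 5, 3, 4, 3, 5, 3],
    "clear_direction_from_leadership": [3, 4, 3, 4, 4, 3, 3, 4],
}
ranked = rank_drivers(drivers, index_scores)
for row in ranked:
    print(row)
print(priority_levers(ranked))  # ['feel_recognized']
```

With more respondents, a regression that includes several drivers at once gives a better picture of each item's independent contribution.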

Qualitative analysis

Quantitative scores tell you where the problem is. Open-ended responses tell you why.

- Code written responses for recurring themes using a simple tagging system, even a manual one works for smaller datasets.

- Surface themes that don't map neatly to your Likert items. Employees often name factors your survey didn't directly ask about, and those gaps are worth knowing.

- Use direct quotes to illustrate what the numbers suggest. A score of 3.2 on belonging means more to a senior leader when it's paired with three representative employee voices.

- Note emotional intensity in written responses. The difference between "I don't feel recognized" and "I haven't been recognized once in two years" is meaningful and shouldn't be flattened by coding alone.
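A simple tagging system can be as basic as a keyword codebook. The sketch below counts how many comments touch each theme and sets aside anything it cannot tag for manual reading, so themes the survey did not anticipate are not silently dropped. The codebook and the sample comments are hypothetical. A real codebook would be built after reading a sample of actual responses.

```python
from collections import Counter

# Hypothetical codebook: theme -> word stems that signal it.
CODEBOOK = {
    "recognition": ["recogni", "appreciat", "thank"],
    "career_growth": ["promot", "career", "advance"],
    "workload": ["workload", "burnout", "overtime"],
}

def tag_response(text):
    """Return the set of themes whose stems appear in the comment."""
    lowered = text.lower()
    return {
        theme
        for theme, stems in CODEBOOK.items()
        if any(stem in lowered for stem in stems)
    }

def theme_counts(comments):
    """Count comments per theme and collect untagged comments for manual review."""
    counts = Counter()
    untagged = []
    for text in comments:
        tags = tag_response(text)
        if tags:
            counts.update(tags)
        else:
            untagged.append(text)
    return counts, untagged

# Made-up comments for illustration only.
comments = [
    "I haven't been recognized once in two years.",
    "There is no clear path to promotion on my team.",
    "More appreciation from leadership and a real career plan would help.",
    "The commute is getting harder since the office moved.",
]
counts, untagged = theme_counts(comments)
print(dict(counts))
print(untagged)  # the commute comment, which no theme in the codebook covers
```

Keyword matching counts mentions but cannot judge intensity, so it supplements reading the responses rather than replacing it.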

Benchmarking your results against industry standards

Context makes scores meaningful. Without benchmarks, a 3.7 out of 5 may look like a passing grade when it might be significantly below what comparable organizations are seeing.

Industry-level commitment scores vary by sector. Research suggests healthcare typically scores higher on commitment dimensions (around 4.1 out of 5) than retail (around 3.5), with technology falling in between (around 3.8). Those differences reflect workforce composition, job design, and cultural norms as much as organizational effectiveness, so treat sector benchmarks as context, not judgment.

A few benchmarking principles worth holding to:

- Use percentile rankings rather than raw score comparisons when working with external benchmarks. Knowing you're in the 60th percentile is more actionable than knowing your score is 3.7.

- Sources for external benchmark data include SHRM reports, academic meta-analyses, industry consortiums, and established survey vendors. Cross-check methodology before drawing comparisons, since scale design and population affect comparability.

- Internal benchmarking over time typically provides more valuable insights than external comparisons. A consistent 0.3-point drop in affective commitment over three survey cycles is a more reliable signal than a one-time comparison against a sector average from a different year.

- High-performing organizations generally score 4.0 or above on five-point commitment scales. Use that as a directional target, not a ceiling.
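Two of these principles reduce to simple arithmetic: converting a raw score into a percentile rank against a benchmark set, and tracking the change between your own survey cycles. The sketch below shows both. The benchmark scores and cycle scores are invented for illustration and are not real industry data. The percentile definition used (share of benchmark organizations scoring lower, with ties counted as half) is one common convention among several.

```python
from bisect import bisect_left, bisect_right

def percentile_rank(score, benchmark_scores):
    """Percent of benchmark organizations scoring below `score`, ties counted as half."""
    ordered = sorted(benchmark_scores)
    below = bisect_left(ordered, score)
    ties = bisect_right(ordered, score) - below
    return round(100 * (below + 0.5 * ties) / len(ordered))

def cycle_changes(cycle_scores):
    """Point change between consecutive survey cycles, oldest first."""
    return [round(later - earlier, 1) for earlier, later in zip(cycle_scores, cycle_scores[1:])]

# Invented benchmark set of ten comparable organizations.
benchmark = [3.1, 3.3, 3.4, 3.5, 3.6, 3.6, 3.8, 3.9, 4.0, 4.2]
print(percentile_rank(3.7, benchmark))  # 60

# Invented affective commitment scores across three survey cycles.
affective_by_cycle = [3.9, 3.6, 3.3]
changes = cycle_changes(affective_by_cycle)
print(changes)                          # [-0.3, -0.3]
print(all(c < 0 for c in changes))      # True: a steady decline
```

The same 3.7 that looks like a passing grade on its own lands at the 60th percentile here, and the internal trend shows a steady decline that no single external comparison would reveal.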

If you're managing commitment data across departments or regions and need more analytical depth, tools like Workhuman® iQ can surface patterns, flag emerging retention risks, and pair findings with recommendations, reducing the time between data collection and informed action.

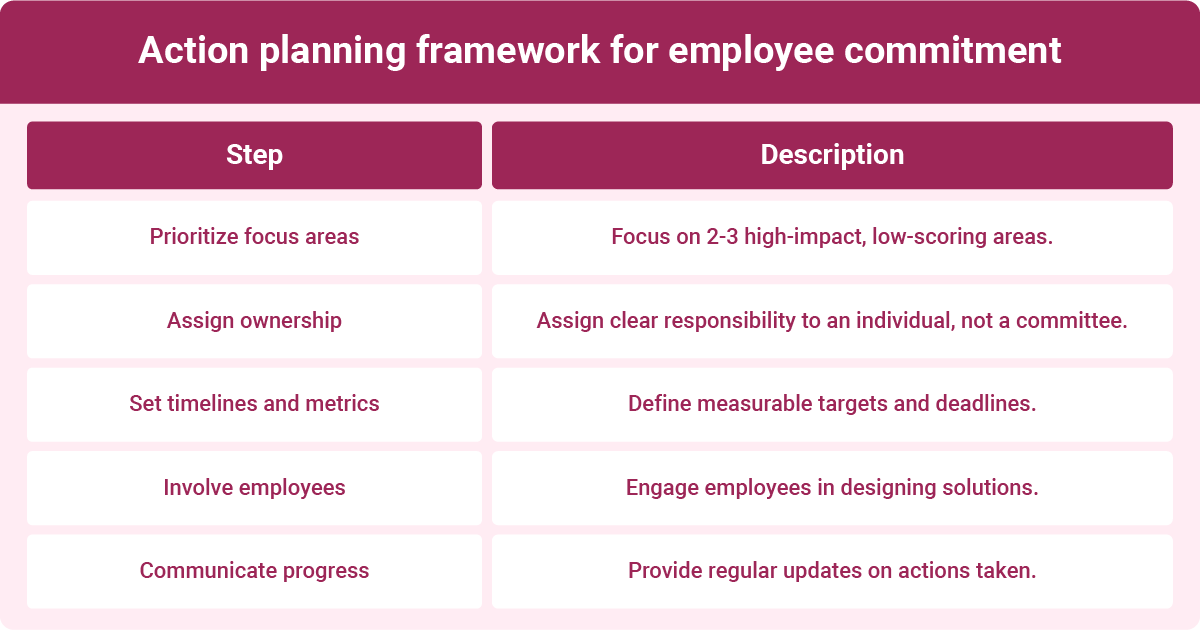

Turning survey insights into action

Surveys that don't lead to visible change do something worse than nothing: they teach employees that sharing feedback is pointless. Research on the survey-action gap consistently shows that when employees don't see results acted on, they're less likely to participate honestly in future cycles and more likely to interpret the next survey invitation as performative. The data you collected only has value if it moves something.

Here's how to close that gap with a disciplined, accountability-focused process.

Results communication best practices

Before any action planning begins, share what you found. Transparency here isn't just good practice; it's the foundation for everything that follows.

Action planning framework

- Prioritize two to three focus areas. Trying to fix everything simultaneously means fixing nothing. Use your driver analysis to identify the highest-impact, lowest-scoring areas, then commit to those with intention.

- Assign clear ownership. Every focus area needs a named person responsible for progress, not a committee. Accountability diffuses quickly when ownership is shared equally by everyone.

- Set timelines and success metrics upfront. Define what improvement looks like before work begins. A target like "increase affective commitment scores in the logistics team by 0.4 points over two survey cycles" is actionable. "Improve morale" is not.

- Involve employees in solution design. Teams that help design the interventions are more likely to trust them and sustain them. Optional working sessions or small-group feedback rounds after the survey give employees agency in what comes next.

- Communicate progress regularly. Don't wait until the next survey to update employees on committed actions. A brief quarterly update, even a simple summary of what's been done and what's next, signals that the process is real and ongoing.

Common commitment-building interventions

The right intervention depends on what your data surfaced, but several consistently prove their worth across organizations and workforce types.

- Recognition programs that reach everyone. Workhuman® iQ™ data consistently shows that employees who feel recognized are more likely to stay and more likely to identify emotionally with the organization. If your commitment scores are low and your recognition data is thin, that connection is worth addressing directly.

- Work arrangement alignment. When employees work in formats that match their preferences, Workhuman® research finds they're significantly more likely to feel connected to organizational culture and less likely to be planning a job search. If your survey flags remote or hybrid employees as a retention risk, explore whether current arrangements actually reflect what those employees want.

- Career development conversations. Low scores on growth and advancement items call for a structural response, not just better communication. That means managers holding real development conversations with documented next steps, not annual reviews that sit in a drawer.

- Manager capability investment. Because manager quality predicts team-level commitment more reliably than almost any other single variable, any meaningful commitment-building effort has to include manager development, not just organization-wide programs.

Measuring impact

Don't wait for the next annual survey to know whether your interventions are working.

- Track leading indicators such as voluntary turnover rates, absenteeism, and eNPS on a monthly or quarterly basis. These shift faster than survey scores and give you early confirmation that something is changing.

- Use pulse surveys between full commitment survey cycles to measure movement on specific focus areas rather than the full instrument. Workhuman® Moodtracker is built for exactly this role: its pulse surveys determine who should be surveyed and when, then connect those sentiment signals to the recognition and engagement data already living in your Workhuman ecosystem.

- Report back to the employees who participated. Closing the loop, by showing what changed between survey cycles and attributing it to what employees told you, is the single most effective thing you can do to build trust in the process and boost participation next time.
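To make the eNPS indicator above concrete, here is a minimal sketch of how it could be tracked quarter over quarter. It assumes the conventional eNPS scoring on a 0-10 "how likely are you to recommend this organization as a place to work" item, where 9-10 counts as a promoter and 0-6 as a detractor. The function name `enps` and the sample ratings are hypothetical and for illustration only.

```python
def enps(scores):
    """Return eNPS (-100 to 100) from a list of 0-10 recommend ratings.

    Assumes the conventional cut-offs: promoters score 9-10,
    detractors score 0-6, and passives (7-8) count only in the total.
    """
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))


# Hypothetical pulse responses per quarter (illustrative data, not real results)
quarterly_scores = {
    "Q1": [9, 10, 7, 6, 3, 8, 9, 5, 10, 7],
    "Q2": [9, 10, 8, 7, 6, 9, 9, 8, 10, 7],
}

# One eNPS value per quarter, ready to chart alongside turnover and absenteeism
trend = {quarter: enps(scores) for quarter, scores in quarterly_scores.items()}
print(trend)
```

Because eNPS is a difference of two percentages, it can swing sharply in small groups, so it is usually safer to read it as a trend than as a single-quarter verdict.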

Survey tools and templates

The right tool depends on what you're trying to accomplish, how large your workforce is, and whether you need enterprise-grade analytics or a lightweight starting point. A free survey tool can work well for a small team running its first commitment pulse.

Start with your requirements, then match the platform to them.

Survey platform selection criteria

| Criteria | What to look for |
| --- | --- |
| Anonymity and data security | Configurable response thresholds (typically 5+ respondents), encryption, GDPR/CCPA compliance, and clear data retention policies |
| Mobile responsiveness | Fully functional on mobile without degraded experience; accessible for deskless and distributed workers |
| Analytics and reporting | Automated score breakdowns by dimension, trend tracking, exportable dashboards, and segment filtering |
| HRIS and ATS integrations | Native connectors to platforms like Workday, SAP SuccessFactors, or ADP to reduce manual data matching |
| Cost structure and scalability | Total cost of ownership, including setup, licensing per respondent or seat, and support tier |
| Customer support and implementation | Availability of onboarding support, documentation quality, and responsiveness for troubleshooting |

Commonly evaluated platforms include Workhuman®, Qualtrics, Culture Amp, Glint (now part of Microsoft Viva), Lattice, Leapsome, SurveyMonkey Enterprise, and Typeform. G2 and Capterra both publish regularly updated user ratings and feature comparisons across this category if you need side-by-side detail.

For organizations that want survey data connected to the rest of their people data, Workhuman® Moodtracker integrates with the broader Workhuman Cloud, pairing pulse survey sentiment with recognition signals, participation trends, and Workhuman iQ analytics to surface patterns that standalone survey tools miss.

Frequently Asked Questions

How often should we survey employee commitment?

Most organizations run a full commitment survey annually or biannually. Quarterly pulse surveys work well for tracking movement on specific focus areas between full cycles. The cadence should match your capacity to act on results; surveying more often than you can act erodes employee trust in the process.

What's a good response rate for commitment surveys?

A response rate of 70% or higher is generally considered strong for organization-wide commitment surveys. Rates below 50% should prompt investigation, as low participation in specific teams or tenure bands often signals disengagement before you've analyzed a single response. Clear communication about purpose and confidentiality consistently improves participation.
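If you want to check participation by team or tenure band before analyzing any answers, a short script can do it. The sketch below applies the 70% and 50% thresholds from the answer above to each segment. The function name `flag_response_rates`, the "acceptable" label for the middle band, and the headcounts are all assumptions made for illustration.

```python
def flag_response_rates(invited, responded, strong=0.70, investigate=0.50):
    """Label each segment's response rate using the thresholds above.

    invited / responded: dicts mapping a segment name to a headcount.
    The "acceptable" label for the 50-70% band is an illustrative choice.
    """
    report = {}
    for segment, n_invited in invited.items():
        if n_invited == 0:
            continue  # nobody invited, so there is no rate to report
        rate = responded.get(segment, 0) / n_invited
        if rate >= strong:
            status = "strong"
        elif rate < investigate:
            status = "investigate"
        else:
            status = "acceptable"
        report[segment] = (round(rate, 2), status)
    return report


# Hypothetical headcounts per team (illustrative data only)
invited = {"Engineering": 40, "Sales": 25, "Support": 30}
responded = {"Engineering": 32, "Sales": 11, "Support": 18}

report = flag_response_rates(invited, responded)
for segment, (rate, status) in report.items():
    print(f"{segment}: {rate:.0%} ({status})")
```

Running the same check by tenure band or location only requires swapping the dictionary keys.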

Should commitment surveys be anonymous or confidential?

Anonymous surveys, where responses cannot be traced to individuals, produce more honest data than confidential ones, where identities are known but protected. Research in organizational psychology consistently shows employees answer more candidly when anonymity is credible. Suppress results for groups below five respondents to protect individuals and reinforce that trust.
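The five-respondent rule is straightforward to enforce when you build reports yourself. Here is a minimal sketch that averages scores per group and withholds any group below the threshold. It assumes responses arrive as (group, score) pairs on a 1-5 scale; the names `summarize_by_group` and `MIN_GROUP_SIZE` and the sample data are hypothetical.

```python
from statistics import mean

MIN_GROUP_SIZE = 5  # suppression threshold taken from the guidance above


def summarize_by_group(responses, min_group_size=MIN_GROUP_SIZE):
    """Average scores per group, returning None for groups that are too small.

    responses: iterable of (group_name, score) pairs.
    """
    groups = {}
    for group, score in responses:
        groups.setdefault(group, []).append(score)

    summary = {}
    for group, scores in groups.items():
        if len(scores) < min_group_size:
            summary[group] = None  # suppressed: too few respondents to report
        else:
            summary[group] = round(mean(scores), 2)
    return summary


# Hypothetical responses (illustrative data only)
responses = [
    ("Finance", 4), ("Finance", 3), ("Finance", 5),
    ("Ops", 4), ("Ops", 4), ("Ops", 3), ("Ops", 5), ("Ops", 2),
]

summary = summarize_by_group(responses)
print(summary)
```

A simple threshold like this does not stop someone from working out a suppressed group's score by subtracting the reported groups from the overall total, so a fuller implementation may need to withhold a second group or roll small groups into a parent segment.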

What do we do if commitment scores are very low?

Start by segmenting results to identify whether low scores are organization-wide or concentrated in specific teams, levels, or locations. Communicate findings honestly to employees, prioritize two to three high-impact drivers based on your data, assign named owners to each focus area, and set measurable targets before launching any intervention. Silence after low scores accelerates the problem.

Conclusion

Employee commitment surveys only create value when they lead to action. The goal is not just to measure how employees feel, but to understand what’s driving loyalty, retention risk, and long-term connection to the organization.

When you ask the right questions, analyze the results thoughtfully, and follow through with visible changes, you turn employee feedback into a more committed workforce and better business decisions.

Ryan Stoltz

Ryan is a search marketing manager and content strategist at Workhuman where he writes on the next evolution of the workplace. Outside of the workplace, he's a diehard 49ers fan, comedy junkie, and has trouble avoiding sweets on a nightly basis.
