Why Recognition Is the Missing Link in AI Adoption

Table of contents

- Defining AI adoption

- AI adoption challenges

- Reinforcing AI adoption with recognition

- 3 reasons AI adoption stalls – and how recognition helps

- Change management for AI adoption

- How to measure AI adoption

- AI adoption metrics that actually matter

- AI adoption best practices

- AI stewardship: Building a human-centric, sustainable capability

TL;DR: AI adoption stalls when people don’t trust the tools, don’t feel safe using them, or can’t connect AI work to real priorities. Recognition helps by reinforcing the behaviors you want to spread – and giving leaders visibility into whether adoption is actually taking hold.

If you’re leading AI adoption or AI transformation right now – or maybe just trying to help people use AI in a more efficient and psychologically safe way – you’ve probably noticed something frustrating: the technology may be moving fast, but behavior change is moving very, very slowly.

Tools get rolled out. Training gets scheduled. Maybe even pilots get kicked off. And then, a few months later, leaders are still asking the same questions:

- Why isn’t this showing up in the work the way we expected?

- How can we increase AI adoption across our workforce?

- How can we make gains in AI adoption that translate to real outcomes?

The answer usually comes down to the human glue that is missing: clarity, confidence, and commitment.

Defining AI adoption

AI adoption is the behavioral side of AI transformation. It’s the point where people use AI consistently in real workflows – with the confidence, judgment, and guardrails to do it well. Rolling out tools and training is the start; adoption is when it becomes normal, repeatable, and useful.

Adoption is not simply a technology goal; it is about the human behavior and beliefs that underwrite change. When AI adoption succeeds, your workforce feels connected to the initiative and clear on how it relates to their work and their future – with clear expectations for judgment, risk, and accountability.

People can understand the strategy and still feel detached from it. That’s the gap a lot of leaders are running into with AI. In our recent Workhuman Global Research Study: Recognition as an Engine for Strategy, we found that while 77% of people said they understand their company’s strategic initiatives, only 39% said that they were very personally invested in those initiatives. For many companies (big and small), AI is one of those initiatives.

AI adoption challenges

Most CEOs we talk to aren’t confused about what’s happening. They’ll say it plainly: the bottleneck isn’t the model – it’s the humans around it. People don’t trust outputs yet. Managers don’t know what “good” looks like. And employees are doing the math on what AI means for their job, their reputation, and their future. Too many organizations still treat adoption like a one-time enablement effort instead of embarking on ongoing change management to shift how work gets done.

What organizations are rapidly realizing is that AI adoption isn’t really a technology or skills problem. Not anymore. In most organizations, the bigger blockers are attitudes, behaviors, and trust.

16% of US workers surveyed said the biggest barrier to AI adoption is an ‘unclear use case or value proposition,’ followed by ‘legal, compliance or privacy concerns’ (15%). - Gallup

That’s why, more often than not, the responsibility lands with Human Resources to “bring people along.” Not because HR owns the AI strategy, but because HR manages the human system: capability-building, manager reinforcement, culture, change adoption, and the guardrails that make people feel safe enough to engage.

You can give people access to tools and a prompt library and still get shallow adoption if they’re thinking:

- If I use AI and it’s wrong, I’ll get blamed.

- If I don’t use AI, I’ll get blamed… or left behind.

- If I adopt AI well, am I training my replacement?

Until those questions have answers people can live with, AI initiatives are unlikely to fully take root.

So where can you actually start when the work is less about rollout and more about earning confidence? HR is well positioned to make AI adoption human, visible, and safe: by reducing friction, by reinforcing the behaviors you want to spread, and by naming what responsible, high-value AI use looks like in the context of real work.

Two in five employees believe AI could replace jobs in their area, and over half (54%) are wary of trusting AI. - KPMG

Reinforcing AI adoption with recognition

When AI is new, employees aren’t waiting for another message about transformation. They’re watching what gets reinforced. What gets called out publicly. What gets rewarded. What gets treated as a smart risk versus a career risk.

Which means the most practical place to start is with recognition. Recognition is positive reinforcement at scale, and one of the few mechanisms companies already have that works in the flow of work to encourage behavior.

Recognition lets leaders show – quickly and credibly – what “good” looks like as people learn new tools, new judgment calls, and new ways of working.

Used that way, recognition does three important things at once:

- It makes AI adoption concrete by spotlighting real examples of role-specific use, not abstract encouragement.

- It builds trust by reinforcing responsible behavior and good judgment around AI, especially when the output is wrong or unclear.

- And it creates social proof, so adoption spreads through real work stories instead of top-down directives.

Positive reinforcement like this is how you move people from “I’m not sure what’s expected here” to “I can see what success looks like, and it’s safe to participate.” In our 2025 global alignment research, alignment with strategic initiatives rose 122% for employees who had been recognized in the past week.

Source: Workhuman Global Research Study: Recognition as an Engine for Strategy

That combination matters for AI, because people don’t adopt new ways of working when they feel exposed or unsure how their efforts connect to what the business actually wants.

3 reasons AI adoption stalls – and how recognition helps

Across organizations, AI adoption tends to stall around three questions:

Is AI adoption useful for my work?

This is often the first point of failure, and it’s usually not philosophical. It’s practical. People try AI and immediately hit friction: the tool isn’t integrated, the workflow doesn’t change, the output needs so much checking it feels like extra work, and the value stays theoretical.

When that happens, adoption doesn’t become a movement. It becomes a handful of personal experiments—some visible, many not.

This is one place recognition can make a real difference. It gives teams concrete, role-specific examples of where AI is actually improving work, and it turns those examples into something others can copy. The goal isn’t to celebrate novelty. It’s to normalize the patterns that lead to repeatable wins: testing a tool against an existing process, sharing a simple template, or documenting where AI helps and where it doesn’t.

A recognition message might look like this: “Shoutout to Maria for piloting the AI summarization tool on the ACME account. She tested it against our existing notes process, flagged where it lost context, and shared a template the team can reuse. That’s the kind of practical experimentation that makes adoption real.”

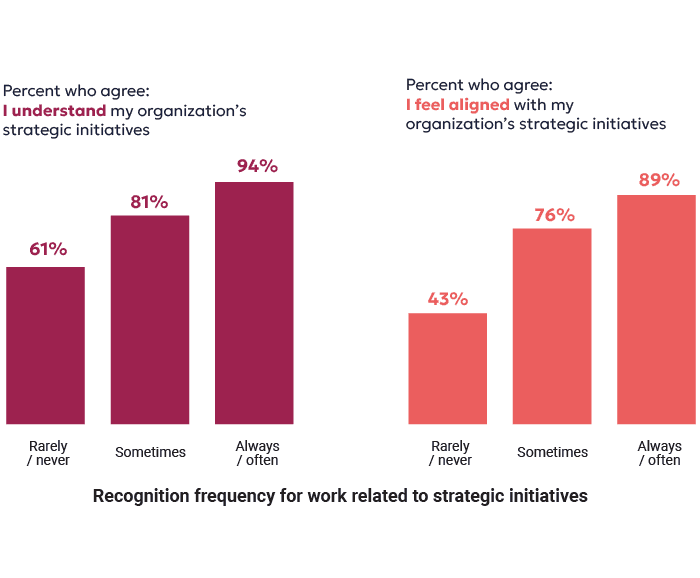

Source: Workhuman Global Research Study: Recognition as an Engine for Strategy

Is AI adoption safe here?

Even when AI is useful, adoption gets strange fast if people don’t feel safe using it. Not “safe” in the cyber sense, but safe in the human sense: safe to ask a basic question, safe to challenge an output, safe to admit something went wrong, safe to learn out loud without taking a reputation hit.

This is where many organizations assume the answer is more training. But more training doesn’t resolve fear. What resolves fear is clarity—reinforced over time—about what’s encouraged, what good judgment looks like, and what happens when the output is wrong.

Recognition is one of the simplest ways to reinforce those norms without adding more policy. It lets leaders and managers reward responsible AI use: validating outputs, catching errors early, escalating risk, and improving prompts or workflows so others don’t repeat the same mistake.

A recognition message might look like this: “Thanks to Jordan for catching the error in the draft before it went to the customer and writing up what went wrong. The most important AI skill right now is judgment – knowing when to trust the output and when to challenge it.”

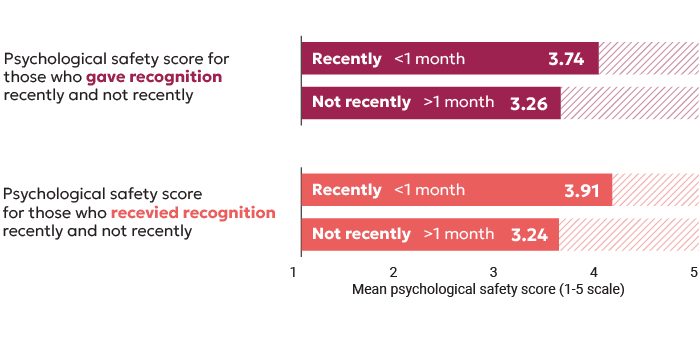

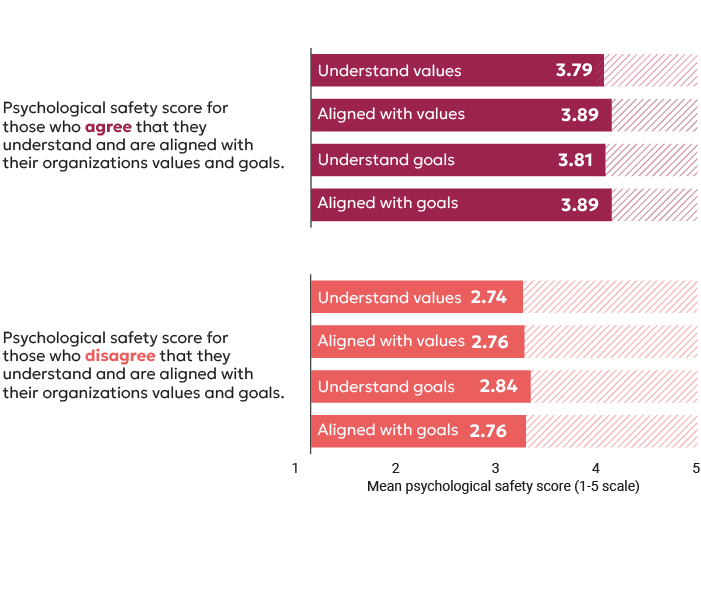

This reinforcement effect also shows up in the data. In our alignment research, employees who had been recognized in the past month reported 21% higher psychological safety. The same pattern holds for alignment and understanding: when recognition is recent, people are more likely to feel connected to where the organization is going and confident enough to learn in the open. That’s exactly the climate AI adoption needs if you want usage to move beyond a few early adopters.

Source: Workhuman Global Research Study: Recognition as an Engine for Strategy

Does AI adoption really matter to my work?

This is where AI efforts can quietly die. Not because the tools are bad, but because the organization never makes the shift from “we’re experimenting” to “this is how we work now.”

When strategy doesn’t cascade, people can’t tell what matters, managers don’t know what to reinforce, and AI becomes another initiative employees assume will fade. The result is familiar: pilots happen, activity increases, but behavior change doesn’t spread.

Recognition helps here by translating priorities into visible examples. It answers “does this matter?” by showing what counts—especially when recognition is tied to the outcomes the business is actually driving.

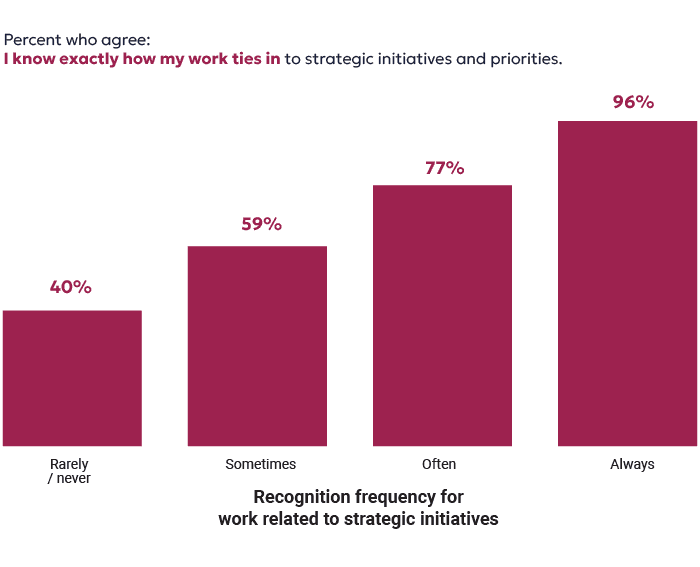

Our research shows how strong that effect is. When employees are often or always recognized for work tied to strategic initiatives, they are 102% more likely to feel aligned to those initiatives. And when recognition is linked to strategic initiatives and goals, employees are also 129% more likely to say they know exactly how their work contributes.

A recognition message might look like this: “Kudos to Priya for updating the HR workflow after the AI pilot instead of just using the new tool inside the same old process. That change in handoffs reduced manager workloads and helped us hit the cycle-time target on performance management we’ve been driving toward.”

Taken together, these three questions explain why AI adoption can feel so uneven. People aren’t resisting the future. They’re looking for proof: proof that AI helps, proof that it’s safe, and proof that it matters here. Recognition is one of the most practical ways to create that proof at scale and help people feel more invested in AI priorities.

Change management for AI adoption

AI adoption may be new for most companies, but fundamentally, it is a change‑management process, one that requires clear expectations, psychologically safe learning, ongoing reinforcement, and visibility into whether behaviors are actually shifting. The good news is, you've probably been here before. Your AI transformation will follow a predictable, familiar change‑management arc: integrating AI into real workflows, identifying role‑specific use cases, equipping people through training and coaching, and then reinforcing behaviors in the flow of work through recognition and measurement.

And that means recognition can play the same role here as in any cultural or behavioral shift: as a culture mechanism that helps leaders socialize ideas, encourage behavior, and track progress against change management goals.

Source: Workhuman Global Research Study: Recognition as an Engine for Strategy

How to measure AI adoption

Once you start reinforcing the right behaviors, something else happens: you can stop guessing and start measuring.

One of the hardest parts of AI transformation is that leaders don’t have a clear line of sight. Usage data can tell you who logged in. It can’t tell you whether people are applying AI well, whether managers are reinforcing good judgment, or whether the organization is actually building shared habits instead of isolated pockets of experimentation.

By applying AI and natural language processing to recognition data, Workhuman iQ helps leaders answer practical questions they can’t get to with usage dashboards alone: Are we seeing responsible AI experimentation show up across teams, or only in pockets? Are people tying AI work to the priorities we’re pushing this quarter? Where is momentum building — and where are teams quietly opting out?

Recognition gives you a way to not only influence behavior, but also to see whether it’s spreading, where it’s stalling, and what “good” actually looks like across the organization. Every recognition is also a signal for leaders – a practical record of what’s happening, where initiatives are taking hold, and where friction is still winning.

With Topics and the Culture Hub, leaders can see which priorities and behaviors are showing up most in day-to-day recognition, as NLP and keywords tie behavior to company goals. Several Workhuman clients have even created recognition award reasons tied directly to AI initiatives. With analytics and AI Assistant, leaders can also quickly answer questions and surface patterns in impact, skills, and contribution — and in Team Overview, individual managers can also spot how work is actually flowing across networks.

The result is a view of adoption as behavior, a sort of MRI of your organization that shows what people are working on in real time – and who is most aligned to the work that matters.
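To make the idea of tying recognition text to priorities a little more concrete, here is a minimal sketch of keyword-based topic tagging. It is not how Workhuman iQ or Topics works internally; the topic names, keyword lists, and the `tag_topics` function are all hypothetical, and a real NLP pipeline would go well beyond simple keyword matching.

```python
import re

# Hypothetical topic -> keyword map, invented for illustration only.
# A real program would define its own topics and vocabulary.
TOPIC_KEYWORDS = {
    "ai_experimentation": ["pilot", "piloting", "tested", "experiment"],
    "ai_judgment": ["catching the error", "validated", "flagged", "escalated"],
}


def tag_topics(message, topic_keywords=TOPIC_KEYWORDS):
    """Return the set of topics whose keywords appear in the message."""
    text = message.lower()
    found = set()
    for topic, keywords in topic_keywords.items():
        for keyword in keywords:
            # Match whole words or phrases only, not substrings of longer words.
            if re.search(r"\b" + re.escape(keyword) + r"\b", text):
                found.add(topic)
                break
    return found


message = (
    "Shoutout to Maria for piloting the AI summarization tool. "
    "She tested it against our existing notes process and flagged "
    "where it lost context."
)
print(sorted(tag_topics(message)))
# prints ['ai_experimentation', 'ai_judgment']
```

Even a rough tagger like this shows the principle: once recognition messages carry topic labels, they can be counted by team, by manager, or by quarter.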

Sanofi: Making AI adoption stick at scale

Sanofi provides a compelling example. Raj Verma, Chief Culture, Inclusion, and Employee Experience Officer, shares how they’re embedding AI by activating trust, belonging, and shared values. This story illustrates how culture and recognition quietly do the hard work of transformation, reducing uncertainty, reinforcing shared values, and creating conditions where adoption is possible.

AI adoption metrics that actually matter

If you’re trying to understand whether AI adoption is really taking hold, the usual metrics only get you so far. Logins, licenses, and training completion rates can tell you who has access and who showed up. They can’t tell you whether people are using AI well, whether good judgment is spreading, or whether adoption is moving beyond a few early adopters.

The more useful question is: what behaviors are we actually seeing in the flow of work – and are they showing up broadly, consistently, and in ways that map to what the business cares about?

Here are five AI adoption metrics to consider that get at that reality:

- Percentage of teams/employees recognized for AI workflow improvements

- Frequency of “judgment” behaviors being recognized (validation, risk escalation, correction)

- Frequency of AI-related Topics being recognized, and top contributors

- AI skills being exhibited across individuals and teams

- Manager reinforcement rates (how often managers recognize AI-related behaviors)
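As a rough illustration of how a few of these metrics could be computed, here is a small sketch over a made-up list of recognition records. The `Recognition` record shape, the tag names, the team assignments, and the sample data are all assumptions for the example, and "manager reinforcement rate" is defined here as the share of manager-given recognitions that are AI-related, which is only one possible reading.

```python
from dataclasses import dataclass


# Hypothetical record shape; a real recognition export will look different.
@dataclass(frozen=True)
class Recognition:
    recipient: str
    team: str
    giver_is_manager: bool
    tags: frozenset  # illustrative labels such as "ai", "ai_workflow", "judgment"


def share_of_teams_recognized(records, all_teams, tag):
    """Fraction of teams with at least one recognition carrying `tag`."""
    if not all_teams:
        return 0.0
    teams_hit = {r.team for r in records if tag in r.tags}
    return len(teams_hit & set(all_teams)) / len(all_teams)


def tag_frequency(records, tag):
    """Number of recognitions carrying `tag`."""
    return sum(1 for r in records if tag in r.tags)


def manager_reinforcement_rate(records, tag="ai"):
    """Share of manager-given recognitions that carry `tag` (assumed definition)."""
    from_managers = [r for r in records if r.giver_is_manager]
    if not from_managers:
        return 0.0
    return sum(1 for r in from_managers if tag in r.tags) / len(from_managers)


# Made-up sample data for illustration.
records = [
    Recognition("Maria", "Sales", True, frozenset({"ai", "ai_workflow"})),
    Recognition("Jordan", "Support", False, frozenset({"ai", "judgment"})),
    Recognition("Priya", "HR", True, frozenset({"ai", "ai_workflow"})),
    Recognition("Sam", "Finance", True, frozenset({"teamwork"})),
]
teams = ["Sales", "Support", "HR", "Finance"]

print(share_of_teams_recognized(records, teams, "ai_workflow"))  # prints 0.5
print(tag_frequency(records, "judgment"))                        # prints 1
print(round(manager_reinforcement_rate(records), 2))             # prints 0.67
```

The exact definitions matter less than tracking them consistently over time, so you can see whether recognized AI behaviors are spreading beyond a few teams.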

AI adoption best practices

If you want adoption that scales, recognize the behaviors that make scale possible. Here’s a checklist you can encourage managers to consider:

- Trying a role-specific use case for AI and sharing what worked (and what didn’t)

- Improving an AI workflow, not just using a tool inside an old process

- Validating outputs, catching errors, and making corrections visible

- Escalating AI-related privacy, compliance, or customer risk early, rather than pushing it downstream where it can erode trust

- Teaching others AI through demos, templates, prompt libraries, or simple walkthroughs

- Partnering across teams to fix handoffs (where AI value often gets won or lost)

- Connecting AI use to outcomes that matter: quality, cycle time, customer experience, risk reduction

This is where recognition stops being generic encouragement and becomes a practical adoption lever. You’re reinforcing behaviors that build trust, reduce fear, and help people develop good judgment – without pretending AI is flawless or risk-free.

Once these behaviors are more visible and repeatable, you can turn recognition into a way to see whether adoption is sticking, and which teams or individuals are being recognized as the informal “translators” who help others learn.

AI stewardship: Building a human-centric, sustainable capability

AI is already reshaping how work gets done – but all too often unevenly or without a shared understanding of success. This creates a familiar tension: leaders expect momentum and ROI, while employees look to HR for clarity, fairness, and a future they can trust. But technology alone won’t deliver AI ROI – human systems will.

When asked how other leaders should approach AI transformation while keeping it human, Raj Verma's advice was simple but profound: "You almost need to go slow to go fast."

This isn't about being cautious. It's about investing time upfront to build the cultural foundation so that when transformation accelerates, it sticks. Take a page from Sanofi's playbook:

- Start with belonging: Before rolling out AI, invest in creating a sense of belonging where people feel safe to experiment and fail

- Embed recognition: Use your Workhuman recognition program to recognize the behaviors you want to see during transformation (e.g., curiosity, collaboration, willingness to learn)

- Make data visible: Surface inclusion and recognition patterns so leaders can coach toward the culture you're building

- Connect to purpose: Tie every AI use case back to how it helps drive organizational mission and goals

Ultimately, introducing AI successfully is less about controlling technology and a lot more about designing the human systems that allow AI to be adopted responsibly, effectively, and at scale.

Without stewardship, HR is left responding to downstream effects: role confusion, skills anxiety, declining safety, and stalled adoption. With it, AI becomes something the workforce can absorb, align around, and build on to achieve ROI from investments leaders have already committed to.

About the author

Darcy Jacobsen

Darcy is a passionate storyteller and champion of workforce transformation, human connection, and recognition-driven culture. As an author on the Workhuman Live Blog, she loves to connect deep research insights with modern workplace dynamics to uncover what really drives engagement, belonging, and happiness at work. With a background in communications and a master's in medieval history, she brings a unique perspective to her writing, taking deep dives into all topics around organizational psychology and the science of gratitude.